U.K. NATS Systems Failure

Join Date: Jan 2006

Location: Cyprus

Age: 76

Posts: 270

Walnut

Having just seen the CEO of NATS say a single piece of faulty data caused the meltdown, I don’t believe him.

I know from airline sources that the problem was known at 0800, 4 hrs before NATS admitted to it. That’s why flights were initially posted as delayed before being cancelled around midday, i.e. NATS covered up the problem.

Eventually, circa 4 hrs later, around 1500, NATS announced they had fixed the problem. They hadn’t; the bug had washed out because the system holds about 4 hrs of data.

So my analysis is that every time there is a faulty input of data the system resets itself to protect itself. Fine, but surely an airline, on discovering its flight plan had been rejected, will check and re-enter its data correctly. So I believe a DoS input by a third party was reintroduced, causing the NATS system to go down again. As so many flight plans were being submitted it was very difficult to detect; only when NATS admitted there was a problem did the malevolent data cease being input, and it eventually washed out.

So, CEO, please explain the delay.

I suspect that an underlying reason for the severity of this breakdown was that the ATC system has been quietly ‘redlining’ for some time. The post-Covid boom in travel numbers is now making the decisions airlines made wrt putting more emphasis on narrow-bodies look very suspect. The math is simple: for a given number of passengers, smaller A/C means more movements.

And it is a problem that will take quite some time to unscramble.
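To put rough numbers on the "smaller A/C, more movements" point, here is a minimal sketch. The seat counts and the passenger total are illustrative assumptions only, not figures from any airline or from NATS.

```python
import math


def movements_needed(passengers: int, seats_per_aircraft: int,
                     load_factor: float = 1.0) -> int:
    """Number of flights needed to carry `passengers`, rounded up."""
    if seats_per_aircraft <= 0 or not 0 < load_factor <= 1:
        raise ValueError("seats must be positive and load_factor in (0, 1]")
    return math.ceil(passengers / (seats_per_aircraft * load_factor))


# Hypothetical seat counts, chosen only to make the arithmetic obvious.
NARROW_BODY_SEATS = 180
WIDE_BODY_SEATS = 360

if __name__ == "__main__":
    pax = 36_000  # assumed passenger total for the comparison
    print("narrow-body movements:", movements_needed(pax, NARROW_BODY_SEATS))
    print("wide-body movements:", movements_needed(pax, WIDE_BODY_SEATS))
```

With these assumed numbers, halving the seat count doubles the movements the ATC system has to handle for the same number of passengers.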

Join Date: Oct 2004

Location: Southern England

Posts: 483

Having just seen the CEO of NATS say a single piece of faulty data caused the meltdown, I don’t believe him.

I know from airline sources that the problem was known at 0800, 4 hrs before NATS admitted to it. That’s why flights were initially posted as delayed before being cancelled around midday, i.e. NATS covered up the problem.

Eventually, circa 4 hrs later, around 1500, NATS announced they had fixed the problem. They hadn’t; the bug had washed out because the system holds about 4 hrs of data.

So my analysis is that every time there is a faulty input of data the system resets itself to protect itself. Fine, but surely an airline, on discovering its flight plan had been rejected, will check and re-enter its data correctly. So I believe a DoS input by a third party was reintroduced, causing the NATS system to go down again. As so many flight plans were being submitted it was very difficult to detect; only when NATS admitted there was a problem did the malevolent data cease being input, and it eventually washed out.

So, CEO, please explain the delay.

The NATS systems beyond the one we are assuming was the issue also have a buffer of data of up to 4 hours. This data will start to go stale as amendments, coordinations and new plans don't arrive, but it is a good basis to continue to operate without flow restrictions if you expect the technical issue to be fixed. At some point somebody will decide it isn't coming back and will make the call to impose flow. That was done before 10:00 UTC, although it was about 2 hours later before NATS itself published anything.

Eventually all will be in the public domain, however much anybody tries to stop it, so why would they bother to lie?

So the flight plan was fine for Eurocontrol and other systems but not for NATS. And NATS uses modified US software for flight handling, which likely isn’t the same as Eurocontrol et al.? But this system has worked flawlessly until now? Does that point to something (software/firmware?) having changed recently in Euroland that hasn’t been changed here, thus causing the failure?

Given the importance of this system you'd have to hope there's a decent investigation and a full public report that would reveal both the cause and the underlying products in use, along with how redundancy is managed. To my mind there are certain things that bear critical scrutiny; decent information should allay unhelpful speculation, but also allow competent people to assess the risk faced in being involved with said system.

FP.

Join Date: Oct 2004

Location: Southern England

Posts: 483

Last time there was an "independent" review and a public report, which is still available on the CAA website, and a Parliamentary Select Committee hearing which, it would be fair to say, didn't really add much of value. This incident was orders of magnitude more disruptive, so you'd expect at least the same, although this Government isn't too hot on transparency and is rather dismissive of experts.

There has to be a system whereby the ANSP is penalised in favour of the airlines, not the Exchequer. It would be tricky to design and implement, but surely doable: a formula based initially on minutes of delay, distributed according to the impact on individual airlines (not necessarily the largest operators, but those on whom the event has had the largest impact). Most importantly, it must be a charge that the ANSP is not simply able to claw back from the airlines in future.
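As a rough illustration of what such a formula could look like, here is one possible sketch. Everything in it is an assumption made for the example: the rate per delay minute, the choice of "delay minutes per scheduled flight" as the measure of impact, and the function name. It is not the scheme in the NATS licence or any regulator's method.

```python
def allocate_penalty(delay_minutes: dict[str, float],
                     scheduled_flights: dict[str, int],
                     rate_per_minute: float) -> dict[str, float]:
    """Split a delay-based penalty pool between airlines by relative impact.

    The pool is rate_per_minute * total delay minutes (assumed formula).
    Each airline's share is weighted by its delay minutes per scheduled
    flight, so a small operator that was hit hard is not crowded out by
    a large operator that was hit lightly.
    """
    pool = rate_per_minute * sum(delay_minutes.values())
    impact = {
        airline: delay_minutes[airline] / scheduled_flights[airline]
        for airline in delay_minutes
    }
    total_impact = sum(impact.values())
    if total_impact == 0:
        return {airline: 0.0 for airline in delay_minutes}
    return {
        airline: pool * impact[airline] / total_impact
        for airline in delay_minutes
    }


if __name__ == "__main__":
    # Hypothetical airlines and figures, for illustration only.
    delays = {"BigAir": 1000.0, "SmallAir": 1000.0}
    flights = {"BigAir": 100, "SmallAir": 25}
    print(allocate_penalty(delays, flights, rate_per_minute=1.0))
```

In this made-up case both airlines suffer the same total delay, but the smaller one receives the larger share because the delay is a bigger fraction of its operation. Preventing the ANSP from clawing the charge back later is a regulatory matter that no formula like this addresses.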

Join Date: Oct 2004

Location: Southern England

Posts: 483

The NATS licence includes a penalty scheme whereby a certain level of delay triggers a reduction in future charges. It is deliberately not punitive, to avoid influencing operational decision making, so it is unlikely to cover the airlines' costs.

Join Date: Nov 2018

Location: UK

Posts: 82

Never listen to the very vocal Irishmen. If you follow their logic, that of MOL at least, we should all work 12h a day, 7 days a week, for half of the money so that his airline can make more profit. But on the cost of backup systems you take the problem the wrong way. A functioning backup in an ATC centre is part of the system from the outset, regardless of the end price. You do not buy a system and later ask for a backup.

That said, today if you have a very stable, modern, advanced system built by any of the well-known established manufacturers, with good preventive maintenance and well-paid technical staff doing the upgrades when necessary, your backup system is likely to be used only some minutes a year. Also, we do not have a backup system only to maintain capacity; it is primarily to maintain the same level of safety. In ATC we are in the safety business, even if our vocal Irishmen claim we are there to provide endless capacity and no delays.

In this incident safety was not compromised (at least as far as I heard). It caused delays, diversions and cancellations, but this is a capacity consequence, not a safety one.

What caused the system to crash is not really the important issue. It is nice to know, in order to learn and prevent it from happening again, but why it took so long to restart the primary system is the question I would like to ask if I had the chance.

The other serious managerial error was stopping the backup that used to occur through the Prestwick Centre, in the same way that Eurocontrol does (see above), but 'the two centres went their separate ways...'

Join Date: Nov 2018

Location: UK

Posts: 82

'Having just seen the CEO of NATS say a single piece of faulty data caused the meltdown, I don’t believe him.

I know from airline sources that the problem was known at 0800, 4 hrs before NATS admitted to it. That’s why flights were initially posted as delayed before being cancelled around midday, i.e. NATS covered up the problem.

Eventually, circa 4 hrs later, around 1500, NATS announced they had fixed the problem. They hadn’t; the bug had washed out because the system holds about 4 hrs of data...So, CEO, please explain the delay.'

Exactly - he can't (without removing the cover up).

This is clearly a plausible explanation, but at first glance it doesn't seem as complex a task as you suggest.

Whilst there are clearly an infinite number of locations, lots of them can be ruled out simply. Anything outside of bounding boxes for Shanwick, Scottish and London can go immediately. Within that you've got bounding boxes for the areas controlled and only then do you actually have to start considering the real geography. The graph of controllers must be fairly small (otherwise the crew would be constantly on the radio) and the number of possible graphs isn't that big (in computer terms), so anything getting stuck should be detectable. Your satnav can cope with a much bigger problem.
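The cheap first pass described here might look something like the sketch below. It is only an illustration: the box coordinates are made up and are not real airspace boundaries, the names `BoundingBox` and `candidate_regions` are hypothetical, and nothing here reflects how the NATS system actually processes flight plans.

```python
from typing import NamedTuple


class BoundingBox(NamedTuple):
    name: str
    min_lat: float
    max_lat: float
    min_lon: float
    max_lon: float

    def contains(self, lat: float, lon: float) -> bool:
        """True if the point lies inside this latitude/longitude rectangle."""
        return (self.min_lat <= lat <= self.max_lat
                and self.min_lon <= lon <= self.max_lon)


# Deliberately crude, hypothetical rectangles (NOT real boundaries).
BOXES = [
    BoundingBox("London", 49.0, 55.0, -8.0, 2.0),
    BoundingBox("Scottish", 54.0, 61.0, -10.0, 0.0),
    BoundingBox("Shanwick", 45.0, 61.0, -30.0, -8.0),
]


def candidate_regions(lat: float, lon: float,
                      boxes: list[BoundingBox] = BOXES) -> list[str]:
    """Names of regions whose bounding box contains the point.

    An empty list means the point can be discarded immediately; only
    points that survive need checking against the real geography.
    """
    return [box.name for box in boxes if box.contains(lat, lon)]


if __name__ == "__main__":
    print(candidate_regions(51.5, -0.1))   # a point over southern England
    print(candidate_regions(40.6, -73.8))  # a point far outside all boxes
```

Each check is a handful of comparisons, so this filter costs almost nothing per waypoint; the expensive geometry only has to run on the few points that fall inside a box.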

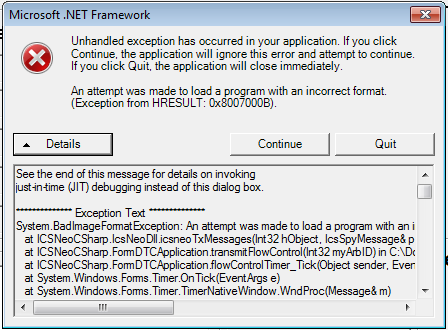

The amount of data and the complexity of the calculations / algorithm to do comprehensive - even exhaustive - checking may indeed be relatively trivial for a modern system, but the NATS system doesn't seem like that from what I've read. It appears to be an older (ancient?) system re-hosted onto a more modern platform.

The NATS base system may, for example, be a 32-bit architecture and simply unable to address more than 4GB of memory, no matter the capacity of the hosting platform. Or it may be that (parts of) the system are inherently single-threaded, so that it matters not how many CPU instances / threads you throw at it; it only goes as fast as a single CPU. Or perhaps the overheads of emulating the original code on a less elderly system, which is in turn being emulated on a quite modern platform, are such as to ensure that the best you can hope for is 'quite a lot faster' than the original implementation, but nothing approaching a modern definition of 'high performance'.

Or if you're really lucky, all three apply.

The only actual solution to such a problem is to rip out the old system and replace it, and that is one of those Major I.T. Systems Project behemoths that are rightly feared. They are inevitably extremely high profile, politically sensitive, long duration, extraordinarily complex with many many stakeholders, and extremely risky.

Join Date: Jul 2013

Location: Within AM radio broadcast range of downtown Chicago

Age: 71

Posts: 851

The only actual solution to such a problem is to rip out the old system and replace it, and that is one of those Major I.T. Systems Project behemoths that are rightly feared. They are inevitably extremely high profile, politically sensitive, long duration, extraordinarily complex with many many stakeholders, and extremely risky.

But would it be worse than the aftermath of a serious cybersecurity breach or cyber attack? A version of "if you think safety is expensive, try having an accident".

Join Date: Nov 2018

Location: UK

Posts: 82

You would think so, but as a PPP (not a publicly listed entity, or a government department/agency) NATS considers that it is NOT bound by the FOI Act, and therefore since the establishment of the Act (2000) 'picks and chooses' which FOI subject access requests (requests for information) it responds to. You can bet your pension that NATS won't be responding to FOI requests on this one!

Join Date: Nov 2018

Location: UK

Posts: 82

The only actual solution to such a problem is to rip out the old system and replace it, and that is one of those Major I.T. Systems Project behemoths that are rightly feared. They are inevitably extremely high profile, politically sensitive, long duration, extraordinarily complex with many many stakeholders, and extremely risky.

Today's "Times" notes that they're upping the money they (the shareholders: the Govt and the airlines) take out as dividends and reducing the amount they planned to invest...

Join Date: Oct 2004

Location: Southern England

Posts: 483

NATS investment is not from the public purse. One of the reasons it was privatised was to remove its borrowing requirements from the accounts, in the days when the Public Sector Borrowing Requirement figure was thought to be important and Government hadn't realised it could just keep printing money and nobody actually cared.

It has been investing a lot since privatisation and continues to do so. Whether all that investment has been wise is difficult to judge, but it's kept a lot of people employed for a while.

If it was NAS that failed, then NATS has been trying to replace that system since the 90s and has failed to do so. Because it's so integrated into all NATS systems, it's a bit like trying to replace the foundations of a tower block whilst keeping the block intact.

Having finally realised that couldn't be done, it embarked on replacing both NAS and the overlying systems a few years ago. It's a huge project that does literally rip it out and start again.
