Boeing Resisted Pilots' Calls for Steps on MAX

Plastic PPRuNer

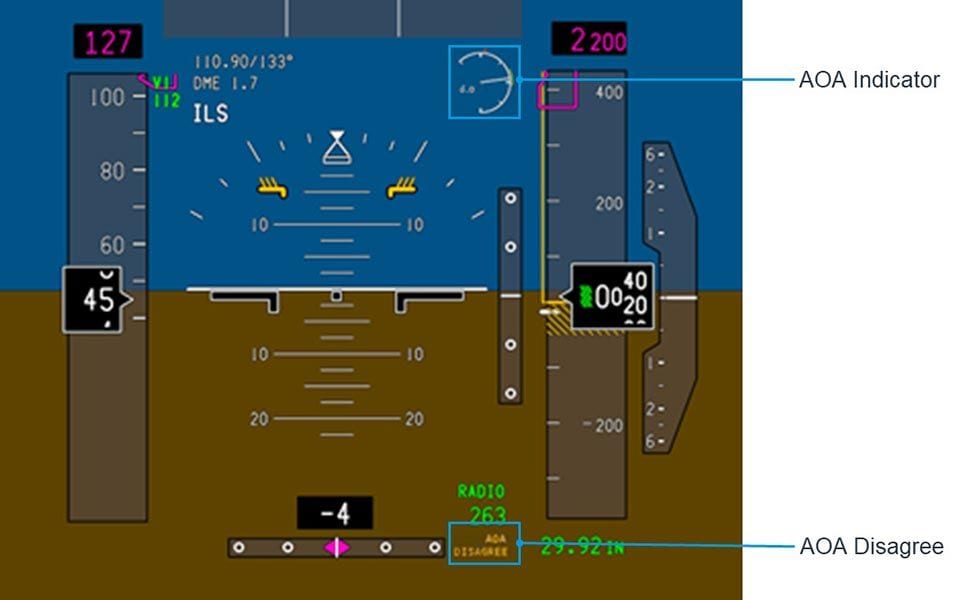

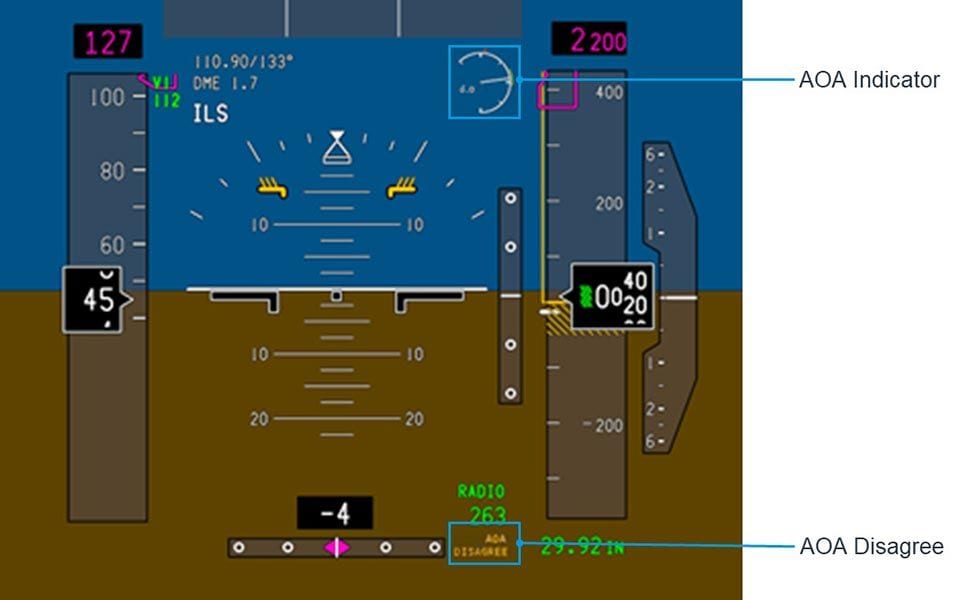

The "AOA DISAGREE" indication is at the bottom of the screen, in minuscule amber letters on a brown background. It is barely legible in the photo and unlikely to attract much attention, particularly if the aircraft is behaving in other distracting ways. Indeed, not only were the crew poorly informed about MCAS, they would be unlikely to link its behaviour to a miniature "AOA DISAGREE" sign. Even if it flashes (does it?), to say proudly that "WE have an 'AOA disagree!' warning light" is one of the most pathetic excuses that I have ever heard (and believe me, I've heard a lot).

I, too, have an interest in safety, although of a rather different kind.

Mac

Join Date: Aug 2002

Location: Castletown

Posts: 241



It's practically useless.

Psychophysiological entity

This is the warning that's not activated unless you've paid $80,000 for the AoA upgrade, is it?

Join Date: Aug 2002

Location: Castletown

Posts: 241


Correct, but the OM systems description (I think) led pilots (and airlines) to believe this was part of the basic offering from the lazy "B". I may be incorrect in my assumption, so take it with a pinch of salt or with your best wee dram.

Join Date: Jan 2008

Location: Singapore

Posts: 16


How would you interpret this statement?

"This is done in recognition that there are airline pilots that are not capable of recognizing and mitigating certain system failures. It is a shame that it took two accidents with many fatalities to understand this, but it is not fair or reasonable to assert that Boeing and the FAA should have known the exact point when systems would become too complex for certain pilots."


Psychophysiological entity

Yes, the AoA warning system was supposed to be part of the basic deal - its not being evident at all times didn't surprise anyone. Press to Test days are not what they used to be.

One logical reason for it being where it is, I suppose, is that it's by the Rad Height. Late-stage slowish flight might well have the scan going to that corner of the screen. (I've taken this from Mac's recent picture.) I can't see any other justification for splitting it from the AoA dial symbol.


PJ2, you absolutely sure? Three AOA inputs and it still managed to cause issues that ended with the Captain retiring because of PTSD.

https://www.atsb.gov.au/media/3532398/ao2008070.pdf


The author of the thesis is not unknown in the world of engineering test pilots.

http://hoh-aero.com/home.htm

http://hoh-aero.com/FAA%20Rudder/AIA...er%20Paper.pdf


A crossroads of a sort is emerging: "How much automation, how much pilot?" Are systems really "too complex"? Are pilots in over their heads when confronted with normal failures (as opposed to outliers such as QF72 and the two recent B737M accidents)? Is training falling down on the job? Is the standards and checking process not rigorous enough, or afraid to confront weak candidates? Or both?

I think that the points raised (about complexity and pilot capacity) hint at taking autonomous passenger flights seriously. They raise the question of whether software engineering has the capacity to address human factors/human behaviour issues.

It has been raised before in PPRuNe: Could software have dealt with QF32 with equal success? With UA232? With Colgan 3407? With USAirways 1549?, etc. If not, where is the line between pilot and software? What are the saves vs. the failures? The questions need to be addressed by both pilots and the engineers together.

Clearly, this requires far greater work and thought than the space available here, but these are the questions raised when one thinks about "training vs. software" solutions to technical, and human factors failures.

To begin, no matter how strange or confusing any of the transports I flew were when the question, "What's it doing now?" arose, every type was always flyable when everything was disconnected. I think that is really worth something. I think that is a huge testimony to the aeronautical engineers and system designers in terms of flyability and redundancy and in terms of graceful failures.

Further, I believe that that is the experience of 99% of the world's airline crews, not because I have the data obviously, but because I view my experience as a kind of median - a down-the-middle experience with a few interesting outliers and a couple of serious challenges which turned out okay but had the potential for alternative endings.

So to your points, if I may: neither Mr Hoh's considerable qualifications as an engineer and test pilot, which are certainly impressive, nor the QF72 accident example and its unhappy result, are counter-examples for what I am trying to convey.

From the WSJ OpEd Mr. Hoh has written:

Many current airline pilots are simply not up to the standard necessary to operate current systems. With that in mind, the airline industry—not just Boeing—needs to lower expectations related to pilot competency in designing systems and dealing with failures.

I include experience and a minimum knowledge base as qualifying conditions for an airline pilot to meet, and if, given this, things go wrong, we may look to human factors for cause and potential solutions. Clearly, automation (& FBW) software works with astonishing reliability and with resilience; it is a demonstrable enhancement to flight safety - I know this having flown the types, (Airbus in my case), for a decade and a half. There were no encounters when the airplane's software prevented appropriate pilot action and to Mr Hoh's point, I also know there were "software saves".

In fact I think we might find more in common regarding our views on system complexity because I don't think he would disagree with the fundamental requirement for thorough, validated training and checking (auditing), and the old principle of knowing one's airplane as thoroughly as available manufacturer information permits. This is all I am emphasizing.

I know very well that it is not possible to know one's airplane at the nuts-and-bolts level, nor is it possible for anyone, let alone pilots, to know that software, like an algorithm, is 100% predictable and reliable under all conditions. As pilots, we operate far above that level, using software-engineered solutions as tools that are expected to work all the time. Pilots should never be expected to be the troubleshooters of bad software design.

Mr. Hoh appears to be claiming that there is an increasing gap between the engineers who create aircraft systems and today's pilots' overall (worldwide) capacity to understand the engineers' complex designs/systems. I submit two initial observations regarding this view: 1) that most pilots do not comprehend complex aircraft systems is trivially obvious, and 2) that "more software" cannot fix a problem which may be software-originated and which has instead become a human factors matter, not a software matter.

It is not possible to verify or even comprehend software in the way that we can normally see and comprehend mechanical systems. Software is pure design without necessary & verifiable principles of operation*. Its outcomes are not 100% predictable, nor can its resilience or brittleness be tested with certainty. If there is a glaring example of this potential for capricious behaviour in what is normally an extremely stable system, it is precisely the QF72 PRIM failure cited above, and the airplane survived the fault.

* Software reliability and dependability, Littlewood, Strigini

I certainly would not be calling for a lowering of expectations of pilots by the manufacturers.

In billions of hours of flight since the 80's, aircraft system design has presented a genuinely insoluble problem for flight crews only a few times, far, far fewer than similar occurrences in other complex systems such as healthcare, automobile engineering and of course space flight. So designers & software engineers cannot have a full and complete comprehension of the software systems they design. It has been understood for a very long time that it is not possible to know all variations in software system design. NTK training arose out of this factor and aircraft manuals began refocussing or thinning out system descriptions.

To use an old expression, I think that Mr Hoh's article is "throwing the baby out with the bathwater".

One can hardly argue against the success of automation and the high level of operational safety it has yielded since its introduction in the late 80's, but one is hard-pressed to argue that AI, robotics, or engineering solutions can encompass human creativity for doing the wrong thing for the "right" reasons.

PJ2

Last edited by PJ2; 19th May 2019 at 17:18.

How much automation, how much pilot?

CAUSES: "The cause of the accident was the activation of the angle of attack protection system which, under a particular combination of vertical gusts and windshear and the simultaneous actions of both crew members on the sidesticks, not considered in the design, prevented the aeroplane from pitching up and flaring during the landing."

Join Date: Dec 2001

Location: Leeds, UK

Posts: 281


I don't think the issue here is too much automation, or too wide a gap between manufacturing engineers and pilots.

I'm quite certain the engineers that designed and built MCAS screamed loud and clear years ago that the design was flawed: relying on one AOA input, having no display for errors or activation, and using a primary flight control surface to add some pressure to the yoke.

And I think it's obvious higher-ups overruled the grease monkey engineers and told them it would have to go in as it was, and yesterday, due to commercial pressures. I just hope those engineers have printed off copies of the emails showing they were overruled, as the engineers will be seen as disposable fodder and thrown under the wheels of the bus. They need insurance.

Just like Pilots get dispatch pressure to depart with a dodgy airplane/weather, so engineers get overruled on bad design.

G


Join Date: May 2010

Location: Boston

Age: 73

Posts: 443


Automation is as much subject to failure as is the pilot, for the simple reason that the automation is designed by a human. One example: an A320 that wouldn't let the crew flare for landing, writing off the airframe.

http://www.fomento.es/NR/rdonlyres/8...006_A_ENG1.pdf


The problem then is balancing the two and retaining the best features of both. One problem I sense is that training has devolved into attempts to have pilots behave as automatons with no experience in dealing with situations outside of the expected failure scenarios.

An argument could be made that most things covered by detailed checklists could in fact be automated.

Plastic PPRuNer

What an excellent post by PJ2!

Without getting too anthropomorphic, the human body and its autofunctions (high blood-sugar > release insulin) can be compared to a modern aircraft, and consciousness to the pilot.

The pilot only becomes aware of the autofunctions when needed (hungry > need to eat > find somewhere to eat) - only then does the pilot need to intervene ("am I REALLY short of gas? > yes/no? - tank transfer failure > fix. Tanks dangerously empty > find nearest airfield [the whys and wherefores can wait]").

The aircraft is designed to (more or less) fly itself from A to B, and by design does not tell the pilot the minutiae of what it is doing from moment to moment. Currently, only when things get really out of hand does the aircraft inform a rather startled pilot what it has been up to in order to continue flying "as normal" (like autotrim and autothrottle); it then stops being "clever" and suddenly hands the uninformed pilot a very unstable aircraft at the limits of its autocapabilities.

The flight-deck is overwhelmed with alarms, some important, others less important, that the pilot has to very rapidly prioritise and deal with appropriately. No wonder s/he does not always do "the Right Thing".

Only very experienced pilots, the Bob Hoovers, de Crespignys (with help) and Chuck Yeagers, who are thoroughly familiar with the aircraft that they are flying and have extensive non-auto training, can deal with these highly abnormal situations. Just like surgeons in a totally unexpected event.

Few pilots can be trained to such levels and no automation can foresee and deal with every situation.

We must just try and get smarter automation that keeps the pilot better informed and pilots who are better equipped to deal with such situations.

But life being what it is, every year or so we must accept that such events will occur and that we cannot reduce this to zero.

Mac


@PJ2: as ever, great input. (Bolding Mine)

In fact I think we might find more in common regarding our views on system complexity because I don't think he would disagree with the fundamental requirement for thorough, validated training and checking (auditing), and the old principle of knowing one's airplane as thoroughly as available manufacturer information permits.

I know very well that it is not possible to know one's airplane at the nuts-and-bolts level, nor is it possible for anyone, let alone pilots, to know that software, like an algorithm, is 100% predictable and reliable under all conditions. As pilots, we operate far above that level, using software-engineered solutions as tools that are expected to work all the time. Pilots should never be expected to be the troubleshooters of bad software design.


Join Date: Nov 2007

Location: dublin

Posts: 2


So, you look down at the trim wheel and it is not turning. Is it continuously trimming ND, with some pauses, or not? If you watch it, continuously, for six seconds (ignoring everything else making noises to try and attract your attention) you may get an answer. You can lose a lot of height in six seconds, at those speeds.

Then it does it again. Then YOU LOOK AT GREEN BAND and it's trimming you into an unrecoverable dive trim scenario.

Counter trim back to mid range green band and STAB S/Ws OFF.

"at those speeds..."? Speed should have been low as per UAS NNP.

Join Date: Aug 2007

Location: Alabama

Age: 58

Posts: 366


Replies of this kind make one wonder about your other posts.

Join Date: May 2019

Location: Somewhere over the rainbow...

Posts: 0
